The $9 Billion Market Built on Junk Science

Key quote:

“There are — I mean, literally, at this point — hundreds and hundreds of studies involving thousands and thousands of people to show that when it comes to emotion, variation is the norm. The idea that emotions can be objectively measured or analyzed at all, in other words, is fantasy.”

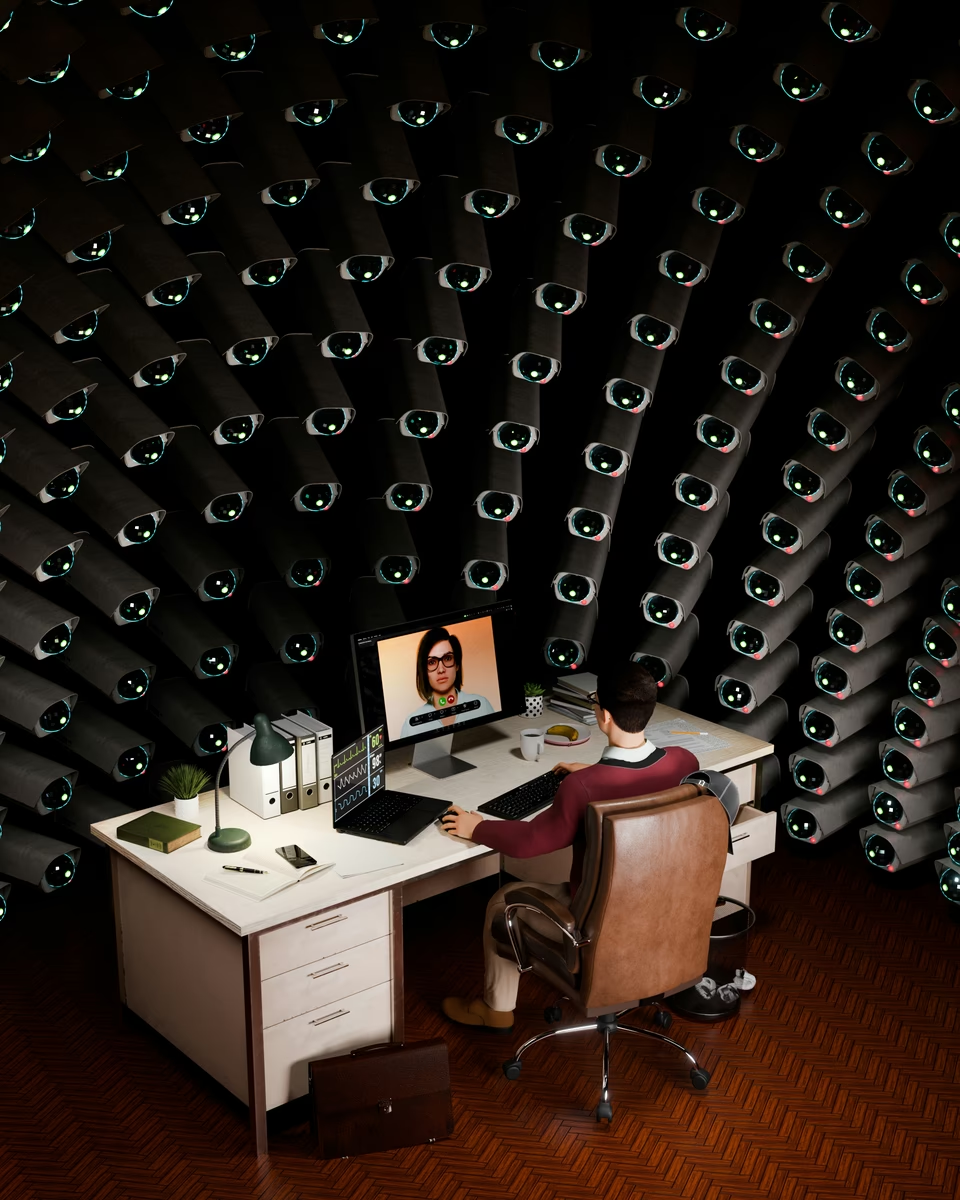

Why it matters: A $9 billion market is being built on the assumption that a computer can read your face and know what you’re feeling. The problem is that it can’t. The Atlantic’s Friday longread is a nice overview, but it misses a couple of details.

The global emotion-AI market is on track to triple by 2030. MetLife, Burger King, and McDonald’s have already deployed it. Burger King named their headset chatbot “Patty”, and she evaluates employees for friendliness. This is happening now.

But the science doesn’t support the product. The industry leans on Paul Ekman’s theory of six universal basic emotions, a framework that has been challenged for decades. Neuroscientist Lisa Feldman Barrett puts it plainly: people scowl when angry only about 35% of the time. An AI watching for scowls misses roughly two-thirds of actual anger, and half of the scowls it does flag as anger aren’t anger at all. A 2026 peer-reviewed study by Westlin and Barrett found that stimuli assumed to reliably evoke a single emotion met “even lenient benchmarks” an “exceedingly low” proportion of the time. In plain English, the training data are statistically unsound.
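To put rough numbers on Barrett’s figures, here is a back-of-the-envelope sketch in Python. Only the 35% and the one-half come from the paragraph above. The 1,000 anger episodes are a made-up round number, the function name is hypothetical, and the sketch assumes the second figure means “half of all scowls come from people who aren’t angry.”

```python
def scowl_detector_stats(anger_episodes, scowl_pct_when_angry, pct_scowls_not_angry):
    """Tally what a scowl-equals-anger detector gets right and wrong.

    Percentages are whole numbers (35 means 35%) so the arithmetic stays exact.
    """
    # Angry people who actually scowl: the only anger the detector can see.
    caught = anger_episodes * scowl_pct_when_angry // 100
    # Angry people who never scowl: invisible to the detector.
    missed = anger_episodes - caught
    # If some share of all scowls is NOT anger, the caught cases are the rest.
    total_scowls = caught * 100 // (100 - pct_scowls_not_angry)
    # Scowls from people who are concentrating, squinting, etc., flagged as anger.
    false_flags = total_scowls - caught
    return {
        "caught": caught,
        "missed": missed,
        "total_scowls": total_scowls,
        "false_flags": false_flags,
    }


# Hypothetical sample: 1,000 episodes of real anger.
stats = scowl_detector_stats(1000, 35, 50)
print(stats)
# {'caught': 350, 'missed': 650, 'total_scowls': 700, 'false_flags': 350}
```

Under those assumptions, the detector catches 350 angry people, misses 650, and wrongly labels another 350 people as angry. It is wrong about anger more often than it is right.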

The EU banned emotion AI in workplaces in February 2025, with enforcement provisions ramping through August 2026 (or possibly December 2027, if a pending provisional agreement becomes final) and fines of up to 35 million euros or 7% of global turnover. MorphCast, founded in Florence, responded by moving to the U.S., which has no equivalent federal restriction. Among the states, Illinois is the lone exception. Its Biometric Information Privacy Act treats facial emotion detection as biometric data, requires written consent, and allows private civil actions. That’s the strongest enforcement tool in the country, and it wasn’t even written with AI in mind.

Meanwhile, 65% of Americans say the government has done too little to regulate AI, according to a February-March 2026 Annenberg survey of 1,330 adults, and that’s across party lines. AI regulation is the least polarized policy area they tested. People aren’t numb on this, and they want guardrails.

For employers, the math should be straightforward. In Illinois, BIPA exposure is real and expensive, so if you’re in Illinois, maybe don’t? Everywhere else, the legal risk of deploying technology that discriminates should give pause, as the ACLU’s 2025 complaint against HireVue and Intuit over a deaf employee’s denied promotion demonstrates. Expect novel applications of other laws against this technology, and expect them to be a drag on revenue and reputation. And in a labor market where hiring is already hard, spending money on tools that make hiring harder, slower, and more legally precarious seems like a misallocation of resources.